Design an on-call schedule that keeps developer burnout at bay

Posted Jun 26, 2017 | 6 min. (1093 words)

Does your on-call schedule need a health check?

If your team is tired, or you find the only solution for late night errors is to hijack repository permissions to stop the spread of the fire, it might be time to take another look at your process. We think designing an on-call schedule that keeps your team healthy and happy is so important that we wrote a book on how to encourage better on-call processes.

Would you feel confident and prepared if your application went down at three am tonight?

Many development teams aren’t.

In this article, I’ll walk through the main considerations of designing a fair and efficient on-call schedule, with some examples from Raygun’s own on-call process (which we’ve been running successfully for over five years).

Raygun lets you detect and diagnose errors and performance issues in your codebase with ease

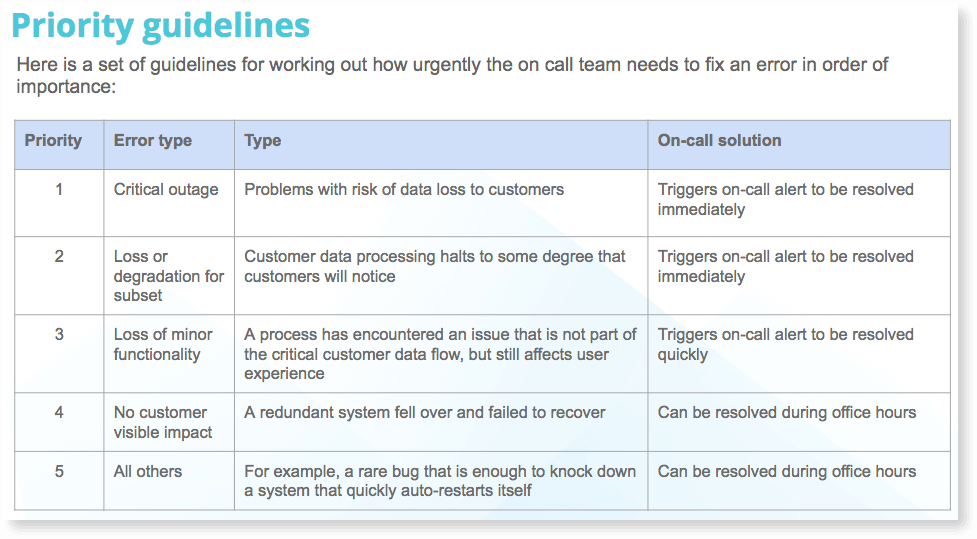

1. Establish priority levels of incidents

What’s your definition of a critical outage versus a rare bug?

Predefine “critical” clearly so there’s no confusion. Jason Hand from VictorOps recalls his first thoughts around his company’s triage process:

“I see something in the message that says ‘CRITICAL’ and I can only wonder to myself: Is it really critical? Because I’m concerned my version of critical may somehow be different than your version of critical.”

Jason Hand, VictorOps

At Raygun we keep it simple – any risk of data loss to customers is our highest priority. Anything that has no customer-visible impact can wait until morning.
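As a rough illustration, the two rules above could be written down as a small Python function so that everyone classifies incidents the same way. This is only a sketch: the names `Priority` and `classify` are made up for this example, and the `NEEDS_TRIAGE` tier for customer-visible issues with no data at risk is an assumption, since the rules above do not cover that case.

```python
from enum import Enum


class Priority(Enum):
    CRITICAL = 1      # wake someone up now
    NEEDS_TRIAGE = 2  # assumed middle tier, not defined in the article
    LOW = 3           # can wait until morning


def classify(risks_customer_data_loss: bool, customer_visible: bool) -> Priority:
    """Map two yes/no questions about an incident to a priority level."""
    if risks_customer_data_loss:
        # Any risk of data loss to customers is the highest priority.
        return Priority.CRITICAL
    if not customer_visible:
        # No customer-visible impact: it can wait until morning.
        return Priority.LOW
    # Customer-visible but no data at risk: placeholder tier to adapt
    # to your own team's definition.
    return Priority.NEEDS_TRIAGE
```

Keeping the definition in one place like this means the person being paged at three am does not have to guess whether their "critical" matches yours.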

Here’s how we define our priorities in more detail:

2. Choosing your on-call engineers and keeping the on-call schedule fair

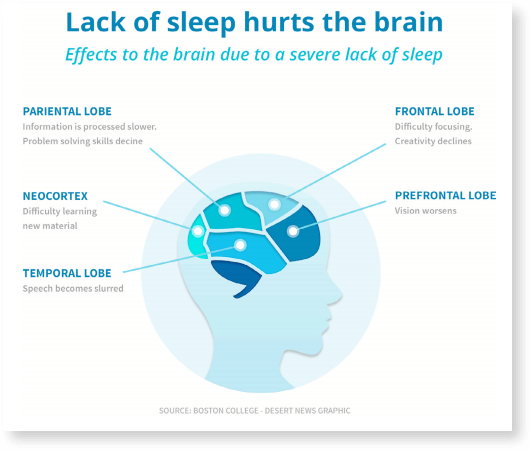

Would you let a jet-lagged developer near your infrastructure? Probably not. It’s true, lack of sleep does hurt the brain.

Usually, senior engineers and system admins are responsible for doing whatever it takes to keep the application running smoothly. This includes being on-call.

For our on-call schedule, there are two engineers on-call at any one time, the Primary and the Secondary. Raygun rotates the on-call engineers to work one week at a time, so each will be Secondary for a week, then Primary the following week. Each then has a break until their turn comes around again.

Two weeks is a good balance – any longer means burnout.
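The rotation described above (a week as Secondary, then a week as Primary, then a break) can be generated from a simple ordered list of names. The sketch below is one way to do it in Python, not a description of Raygun's tooling; `Shift`, `build_rotation`, and the engineer names are hypothetical, and the requirement of at least three engineers is an assumption made so that everyone actually gets a break.

```python
from datetime import date, timedelta
from typing import List, NamedTuple


class Shift(NamedTuple):
    week_start: date
    primary: str
    secondary: str


def build_rotation(engineers: List[str], start: date, weeks: int) -> List[Shift]:
    """Build weekly shifts where each week's Secondary is next week's Primary."""
    if len(engineers) < 3:
        # Assumption: with only two people, nobody would ever be off call.
        raise ValueError("need at least three engineers so everyone gets a break")
    shifts = []
    for week in range(weeks):
        primary = engineers[week % len(engineers)]
        secondary = engineers[(week + 1) % len(engineers)]
        shifts.append(Shift(start + timedelta(weeks=week), primary, secondary))
    return shifts
```

Calling `build_rotation(["Ana", "Ben", "Caz", "Dev"], date(2017, 6, 26), 8)` gives each person two consecutive weeks on call followed by two weeks off. A larger roster lengthens the break without changing the two-week stint.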

The current Primary is the first to receive incident alerts. The Primary must mark the incident as “ACK” or “acknowledged,” then set to work on resolving the issue.

If the Primary doesn’t acknowledge the incident in a reasonable timeframe, set your incident management software to alert the Secondary.
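Most incident management tools let you configure this escalation directly, but the rule itself is small enough to sketch in Python. Everything here is illustrative: `Incident`, `who_to_page`, and the 15-minute `ACK_TIMEOUT` are assumptions for the example, since what counts as a "reasonable timeframe" is up to your team.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Assumption: the article only says "a reasonable timeframe".
ACK_TIMEOUT = timedelta(minutes=15)


@dataclass
class Incident:
    raised_at: datetime
    acknowledged_at: Optional[datetime] = None


def who_to_page(incident: Incident, now: datetime,
                primary: str, secondary: str) -> Optional[str]:
    """Return who should be alerted right now, or None once acknowledged."""
    if incident.acknowledged_at is not None:
        # Someone has marked the incident "ACK"; stop paging.
        return None
    if now - incident.raised_at >= ACK_TIMEOUT:
        # The Primary has not acknowledged in time: escalate.
        return secondary
    return primary
```

A short timeout gets help to the incident sooner but wakes the Secondary more often, so it is worth revisiting the value at hand-off meetings.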

At the end of each week-long rotation, we hold an on-call hand-off meeting where we discuss the incidents that occurred, what we might need to focus on, and how to resolve recurring problems.

3. The alerting process and identifying what has gone wrong

So that’s how to decide who goes on-call, and what a fair on-call schedule looks like, but how can you set your on-call team up for success when a truly critical error does happen?

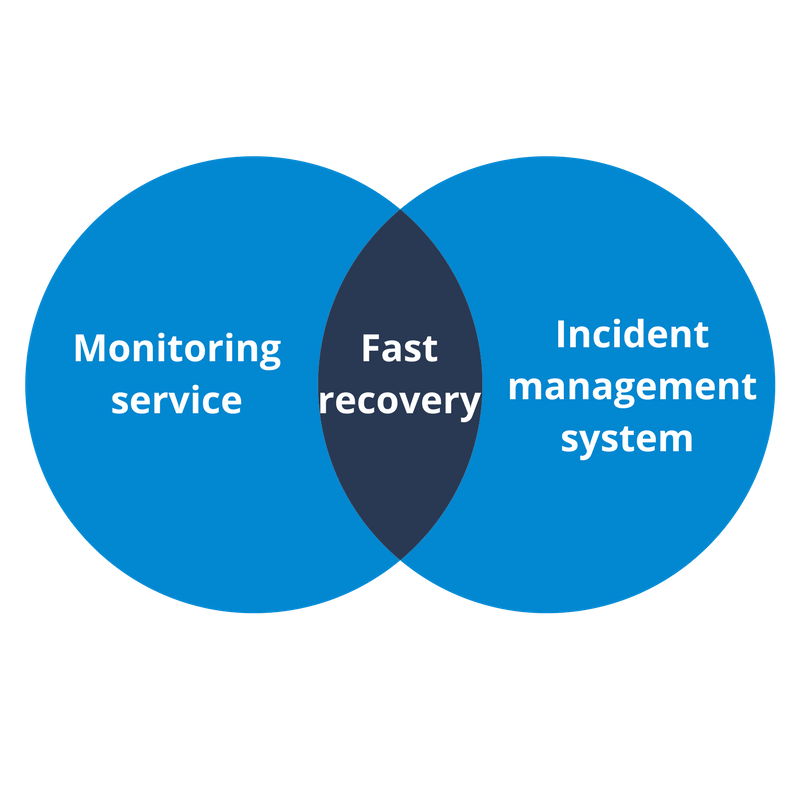

Like most development teams, we rely on tools to help do the heavy lifting.

If your system does go down, we recommend the following workflow:

Alert raised

At Raygun, if two monitors trip, an alert is raised to the Primary.

Triage the issue

The Primary always resolves the issue unless they don’t know how to, in which case the Primary alerts the Secondary.

Identification

Next is the process of figuring out what is going on. If you’ve set up monitoring well, the description attached to the alert will be enough to understand the problem.

Investigation

Drill down into the specifics of what’s going on. If you have Raygun Crash Reporting, check your dashboard. This is where monitoring tools shine: for example, Raygun will tell you the exact line of code the error occurred on.

Resolution

Use the tools you have to hand to resolve errors – a cheat sheet is helpful.

Postmortem

Investigate further to find out what caused the issue, and hold a quick debrief. Check your metrics history to isolate problems (and remember the blameless culture, discussed in more detail below).

Documentation

Document an organized timeline of what happened and what actions were taken to resolve the issue with the goal of making improvements. (This gets logged automatically if you are using the Slack or HipChat integration.)
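If you are not using a chat integration that logs events for you, the workflow above can still be captured with very little code. The Python sketch below shows two pieces under stated assumptions: a check that an alert is only raised once two monitors have tripped (read here as "at least two"), and a small log that prints an ordered timeline for the postmortem. The names `should_raise_alert` and `IncidentLog`, and the monitor names in the usage note, are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Dict, List, Tuple

# From the workflow above: an alert is raised when two monitors trip.
MONITORS_REQUIRED = 2


def should_raise_alert(monitor_tripped: Dict[str, bool]) -> bool:
    """True when enough monitors have tripped to alert the Primary."""
    return sum(monitor_tripped.values()) >= MONITORS_REQUIRED


@dataclass
class IncidentLog:
    title: str
    entries: List[Tuple[datetime, str, str]] = field(default_factory=list)

    def record(self, when: datetime, stage: str, note: str) -> None:
        """Add one event, tagged with the workflow stage it belongs to."""
        self.entries.append((when, stage, note))

    def timeline(self) -> str:
        """Render the events in time order, one per line, under the title."""
        lines = [self.title]
        for when, stage, note in sorted(self.entries):
            lines.append(f"{when:%H:%M} [{stage}] {note}")
        return "\n".join(lines)
```

For example, `should_raise_alert({"http_check": True, "queue_depth": False})` returns `False`, so a single flapping monitor does not page anyone. Recording each stage as it happens ("Alert raised", "Triage", "Resolution", and so on) means the timeline already exists when the postmortem starts.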

4. Developing a friendly on-call culture

Jason Hand recognizes “blameless culture” in successful on-call teams. He reiterates in this article that removing blame gets to the heart of issues much faster, and as a result, no-one withholds information.

Jason recalls one particular incident of being on-call here:

“What I thought would be an opportunity for shaming was transformed into an opportunity for learning. A “Learning Review.” A coordinated effort to understand what took place in as much detail and exactly how events unfolded. By removing blame, we skipped right to a greater understanding of the facts and the specifics of what took place and in what order. Nobody withheld information.”

5. What about your affected customers?

Finally, there’s no need to risk a wider loss of confidence in your community.

In 2015, Slack went down for two and a half hours, creating a media frenzy.

Managing social media fallout after a large outage is tricky. The best way to manage social media is to minimize damage by being transparent. (We wrote about a few companies who didn’t do so well here.)

Many people head straight to social media to raise issues as they feel they may avoid support queues.

This is often a problem for software teams: the people who monitor social media channels usually need to get answers from internal teams before they can respond, yet customers still want to cram detailed support requests into 140 characters.

Error and crash reporting software tracks which users were affected by specific errors, crashes, or poor user experiences, allowing you to contact only the users affected by a bug or underlying issue.
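To make the idea concrete, here is a minimal Python sketch of picking out only the users who hit a particular error. It works on plain in-memory records and does not call Raygun's API or any other vendor's; `ErrorEvent`, `affected_users`, and the field names are hypothetical stand-ins for whatever your error reporting tool exports.

```python
from typing import Iterable, List, NamedTuple, Set


class ErrorEvent(NamedTuple):
    error_id: str
    user_email: str


def affected_users(events: Iterable[ErrorEvent], error_id: str) -> List[str]:
    """Return each user who hit the given error once, in alphabetical order."""
    seen: Set[str] = set()
    for event in events:
        if event.error_id == error_id:
            seen.add(event.user_email)
    return sorted(seen)
```

A list like this lets you send a targeted apology or status update to the people who actually saw the problem, instead of broadcasting to every customer.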

Design an on-call schedule that keeps your team and application happy and healthy

In conclusion, the process of getting alerted is only a small part of maintaining an effective on-call schedule that keeps your application and your team happy and healthy.