A guide to Apdex score: Calculations, improvements, and more

Posted Mar 25, 2022 | 11 min. (2279 words)

Apdex scores focus and align the varying perceptions of different teams. If you ask people in your organization to define what “performance” means for the application you’ve developed and deployed, you’re likely to get different answers. A site reliability engineer (SRE) might argue that a performant application has the highest possible uptime. A designer might say a critical dimension of performance is how easily users can get tasks done thanks to a carefully crafted UI. Product or marketing folks might say the only performance that matters is an application that attracts users, retains them, and keeps MRR rising.

And then there’s you, arguing that an application’s performance is its raw speed: how quickly it responds to input, executes backend code and queries a database, and returns data.

How does an Apdex score help? With all these definitions, each valid but skewed by individual priorities, how can a company agree on what “performance” means across multiple teams, applications, and services? Apdex gets teams to “measure what matters”: the user experience.

What are Apdex scores?

The Apdex (Application Performance Index) score is an open standard, developed and maintained by the Apdex Alliance, which defines three different types of users and their experience with an application:

- A satisfied user feels fully productive on your application due to a smooth, responsive experience that’s free of any slowdowns.

- A tolerating user may notice small amounts of lag or slowdowns, but continues to work around them without complaint.

- A frustrated user is actively thinking about not waiting for your application to respond or abandoning it altogether due to consistent lag or long waiting times.

Apdex measures the ratio of satisfied, tolerating, and frustrated users based on raw response time data. It converts raw data, which can be difficult to interpret, into human-friendly insights about real user satisfaction, which helps disparate (and often non-technical) teams align on the new features or fixes most likely to raise scores. (In this respect, it fills a similar need to Core Web Vitals while measuring quite different indicators.)

Because teams with the proper tools can monitor their Apdex score constantly, it’s a useful application performance metric for tracking user satisfaction over time. That opens up new possibilities, like tracking Apdex after every release to see whether the user experience is getting better (or worse).

How does Apdex work?

The Apdex formula first depends on defining a target response time for satisfied users. There’s no standard number to apply here, as every application has a unique set of users with different tolerances for speed. For example, a doctor who is used to a slow electronic health record (EHR) system might be satisfied with a response time of 15 seconds, whereas a security analyst working in a security operations center (SOC) might require dashboards to load in less than a second.

Let’s say you define a target response time of 1 second or less. The tolerating threshold is four times the target, so any response that takes more than 1 second and up to 4 seconds counts as tolerating. Anything above 4 seconds falls in the frustrated category.
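As a minimal sketch of that bucketing, assuming response times measured in seconds and the 1-second target from the example, the function name `classify` and the sample values below are illustrative only:

```python
def classify(response_time_s: float, target_s: float = 1.0) -> str:
    """Bucket one response time using the target (T) and 4x target (4T) thresholds."""
    if response_time_s <= target_s:
        return "satisfied"
    if response_time_s <= 4 * target_s:
        return "tolerating"
    return "frustrated"


# Made-up response times, in seconds, purely for illustration.
samples = [0.4, 1.0, 2.5, 4.0, 6.2]
labels = [classify(s) for s in samples]
print(labels)
```

Changing `target_s` moves both thresholds at once, which is why picking the target carefully matters so much.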

To calculate your Apdex score, take the number of satisfied samples, add half the number of tolerating samples, and divide by the total number of samples. You’ll always get a score between 0 and 1, with 0 meaning every user is frustrated and 1 meaning every user is satisfied.

Let’s say you took 100 samples with the following results:

- 70 satisfactory measurements (70 * 1 = 70)

- 15 tolerating measurements (15 * 0.5 = 7.5)

- 15 frustrated measurements (15 * 0 = 0)

That adds up to 77.5, which you divide by the 100 samples for a final Apdex score of 0.775, considered “fair” on the Raygun Application Performance Monitoring (APM) platform.
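The same arithmetic can be sketched in a few lines of Python. The function name `apdex_from_counts` is hypothetical and not part of any APM SDK; the counts are the ones from the example above, and the result is expressed on the 0 to 1 scale:

```python
def apdex_from_counts(satisfied: int, tolerating: int, frustrated: int) -> float:
    """Apdex = (satisfied + tolerating / 2) / total samples."""
    total = satisfied + tolerating + frustrated
    if total == 0:
        raise ValueError("Apdex is undefined without any samples")
    return (satisfied + tolerating / 2) / total


score = apdex_from_counts(satisfied=70, tolerating=15, frustrated=15)
print(round(score, 3))  # (70 + 7.5) / 100
```

Frustrated samples contribute nothing to the numerator, but they still count in the total, which is how they drag the score down.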

Why should you use Apdex?

Essentially, the Apdex score is an opportunity to see through your users’ eyes without having to conduct hundreds of interviews, watch endless session recordings, or have an enormous observability suite. You can turn those insights into new business practices and areas of improvement.

- Understand organic growth and retention: No matter what industry you’re in, your users determine whether your application grows organically and survives for the long haul. Holistically understanding the impact of performance on user satisfaction and retention is a crucial part of building a business that’s often neglected until it’s too late.

- Create focus with simplified data: Your time is limited, which means you need better ways to focus on the areas that help your company grow. The Apdex formula is a simple, universal measure that doesn’t need different interpretations across teams.

- Measure user experience over time: Many APM platforms can monitor Apdex continuously, and store historical data, to help understand whether user satisfaction is headed in the right direction and potentially correlate swings with new feature deployments, marketing efforts, or other business activity.

- Learn when to prioritize new features vs. performance: If development teams are focused on shipping fast, they’re more likely to introduce bugs or deploy code that isn’t fully optimized. If you see that Apdex scores are starting to drop, you might consider pausing new development for a while—even if customers are thrilled with your new features and planned roadmap—to optimize existing code and push response times back into satisfactory levels.

- Create service level agreements (SLAs) with customers: If you provide a mission-critical service to your users, they may want assurances that you’ll continue to prioritize availability and their user experience.

- Track every change to your application: Software developers use testing suites, CI/CD pipelines, and blue-green deployments to reduce the risk of shipping buggy code to production. Apdex scores can serve a similar function, helping teams see where a particular deployment might have negatively affected (or improved!) the user experience.
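To make the "over time" idea concrete, here is a rough sketch that groups timestamped response times by day and scores each day separately. It assumes a 1-second target and made-up sample data; an APM platform would do this for you, and the helper names are illustrative:

```python
from collections import defaultdict
from datetime import datetime

TARGET_S = 1.0  # assumed target response time, in seconds


def apdex(times, target_s=TARGET_S):
    """Apdex for a non-empty list of response times in seconds."""
    satisfied = sum(1 for t in times if t <= target_s)
    tolerating = sum(1 for t in times if target_s < t <= 4 * target_s)
    return (satisfied + tolerating / 2) / len(times)


def daily_apdex(samples):
    """Map each calendar day to the Apdex of that day's samples."""
    by_day = defaultdict(list)
    for timestamp, response_time_s in samples:
        by_day[timestamp.date()].append(response_time_s)
    return {day: apdex(times) for day, times in sorted(by_day.items())}


# Hypothetical (timestamp, response time) pairs around a deployment.
samples = [
    (datetime(2022, 3, 24, 9, 0), 0.6),
    (datetime(2022, 3, 24, 9, 5), 0.8),
    (datetime(2022, 3, 25, 9, 0), 2.0),
    (datetime(2022, 3, 25, 9, 5), 5.5),
]
trend = daily_apdex(samples)
for day, score in trend.items():
    print(day, score)
```

A drop from one day to the next, as in this invented data, is the kind of swing you would then try to correlate with a release or other business activity.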

What does Apdex look like in practice?

The first thing to know about using Apdex in practice is that you need to be able to monitor and collect response time data from your application as your real users are interacting with it. That means having an APM tool available for collecting data. Because of the simplicity of the Apdex formula, you could create a DIY solution for calculating one-off scores using raw data. But almost every business will find more value investing in a platform that collects and visualizes application performance monitoring metrics over time and through many releases (more on those choices later).

But here’s an example of Apdex in practice: Let’s say you develop a major update to your signup flow that requires a backend update alongside the release. After deploying the changes into production, your Apdex scores begin to fall—a vital signal that despite not deploying any functional changes to the user experience in your update, something about it is causing frustration among your users.

You eventually figure out that the backend blocks requests while attempting to complete a Stripe API request, which slows the entire application down and makes the overall experience feel far less responsive. With this vital information, you can scope and prioritize an appropriate fix, like implementing queueing in the backend to ensure requests from new users don’t impact existing users, instead of jumping into the next feature unaware of the negative experience.
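One possible shape for that kind of fix, sketched with Python’s standard `queue` and `threading` modules: the request handler only enqueues the slow work and returns, while a background worker makes the slow call. Everything here is hypothetical, including `charge_customer`, which stands in for the third-party payment request with a short sleep; a production system would more likely use a dedicated job queue.

```python
import queue
import threading
import time

signup_jobs = queue.Queue()
processed = []


def charge_customer(email: str) -> None:
    """Stand-in for a slow third-party payment call (hypothetical)."""
    time.sleep(0.05)
    processed.append(email)


def worker() -> None:
    """Drain the queue in the background so request handlers never wait."""
    while True:
        email = signup_jobs.get()
        try:
            charge_customer(email)
        finally:
            signup_jobs.task_done()


threading.Thread(target=worker, daemon=True).start()


def handle_signup(email: str) -> str:
    """Request handler: enqueue the slow work and respond immediately."""
    signup_jobs.put(email)
    return "accepted"


status = handle_signup("new.user@example.com")
signup_jobs.join()  # only for this demo: wait until the worker finishes
print(status, processed)
```

The handler’s response time no longer includes the payment call, so other users’ requests are not held up behind it.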

Apdex scores help uncover hidden bottlenecks — often in dark corners where you might not be able to see them yourself — by translating the experiences of a growing user base into a metric that’s not only easily monitored, but also easily understood.

What’s a good Apdex score?

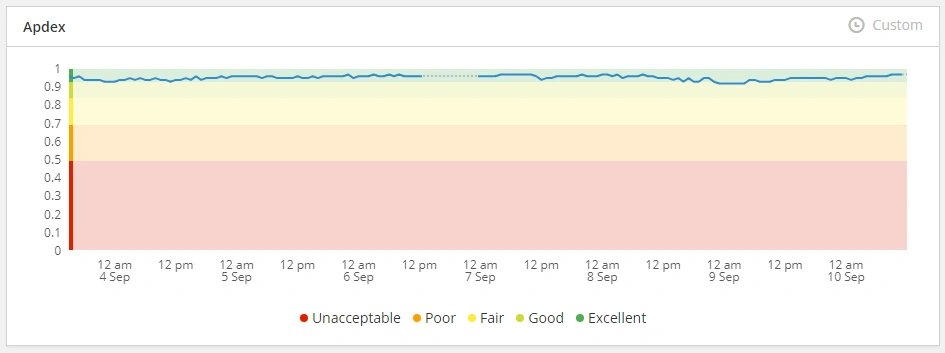

In short, you should aim for an Apdex score of 0.85 or better, which we define as “good” or “excellent.”

In practice, it’s a little more complicated than that. To achieve a good or excellent Apdex score, you need robust software development practices and plenty of ambition to improve your application’s user experience consistently. You also need to establish well-researched standards for satisfied/tolerating/frustrated that align with the context of your industry, target market, and the problem that you’re solving. Different segments of users have vastly varied expectations for performance, and it’s crucial to properly tune your tooling to create useful scores for the experiences your users expect.

You may also want to use different Apdex standards for different tasks/actions within your application based on the perceived value the user gets in exchange for waiting for a task to complete. Users are more than willing to wait a few seconds if it means getting something desirable at the other end, such as an order successfully processing when submitted. If they’re performing a high-repetition task, or something that seems like it isn’t worth the wait, fast responses matter more and a stricter target is appropriate.
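A small sketch of per-action targets, assuming you can tag each response time with the action that produced it. The action names, target values, and sample data are invented for illustration:

```python
# Hypothetical targets in seconds: strict for a repetitive task, lenient for checkout.
TARGETS_S = {"search": 0.5, "checkout": 3.0}
DEFAULT_TARGET_S = 1.0


def apdex(times, target_s):
    """Apdex for a non-empty list of response times in seconds."""
    satisfied = sum(1 for t in times if t <= target_s)
    tolerating = sum(1 for t in times if target_s < t <= 4 * target_s)
    return (satisfied + tolerating / 2) / len(times)


def apdex_by_action(samples):
    """Score each action against its own target response time."""
    grouped = {}
    for action, response_time_s in samples:
        grouped.setdefault(action, []).append(response_time_s)
    return {
        action: apdex(times, TARGETS_S.get(action, DEFAULT_TARGET_S))
        for action, times in grouped.items()
    }


samples = [("search", 0.3), ("search", 1.2), ("checkout", 2.5), ("checkout", 13.0)]
scores = apdex_by_action(samples)
print(scores)
```

Note that a 1.2-second search only counts as tolerating here, while a 2.5-second checkout counts as satisfied, because each action is judged against its own target.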

- Excellent (0.94 - 1): Users are fully productive and are not impeded by response time.

- Good (0.85 - 0.93): Users are generally productive and not impeded by response time.

- Fair (0.70 - 0.84): Users experience some performance issues, but are likely to continue the process based on what they get in return.

- Poor (0.50 - 0.69): Users experience a moderate amount of lag, but are still likely to continue.

- Unacceptable (0.0 - 0.49): Your application is so unresponsive that users abandon their task.
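If you compute scores yourself, these bands are easy to turn into a lookup. The sketch below uses each band’s lower bound as the cutoff, which is an assumption for scores that fall between the two-decimal ranges listed (0.935, for example, would be rated “good”):

```python
def rating(score: float) -> str:
    """Map an Apdex score (0 to 1) to the rating bands listed above."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("Apdex scores must be between 0 and 1")
    if score >= 0.94:
        return "excellent"
    if score >= 0.85:
        return "good"
    if score >= 0.70:
        return "fair"
    if score >= 0.50:
        return "poor"
    return "unacceptable"


print(rating(0.775))
```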

How can you improve your Apdex score?

There’s only one way to improve an Apdex score: Improve the responsiveness of your application.

But the reality of software development is that there’s often a tension between shooting for a very high Apdex score and shipping features and updates, or keeping users happy. It’s hard to know when to prioritize Apdex scores or divert your energy toward improving your product.

Here are a few ways to find the balance:

- Use Apdex scores as a metric for success with every release you deploy. Does shipping a particular feature improve or lower the score?

- Test Apdex scores during development, on staging servers, to potentially catch user experience issues before they go to production.

- Integrate work on performance issues into your processes, like devoting a weeklong sprint every quarter.

- Keep the score a core part of your development cycle’s considerations, since it may be harder to justify focusing a future sprint on improving it.

- Tie Apdex scores into key performance indicators (KPIs) for your development teams along with shipping functionality.
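The first two suggestions could be combined into a simple release check: compare the Apdex of a candidate build (measured on staging, say) against the current baseline, and flag the release if the score drops too far. This is only a sketch; the 0.05 tolerance, the 1-second target, and the sample data are all assumptions you would tune for your own application:

```python
def apdex(times, target_s=1.0):
    """Apdex for a non-empty list of response times in seconds."""
    satisfied = sum(1 for t in times if t <= target_s)
    tolerating = sum(1 for t in times if target_s < t <= 4 * target_s)
    return (satisfied + tolerating / 2) / len(times)


def release_regressed(baseline_times, candidate_times, max_drop=0.05):
    """True if the candidate's Apdex fell more than max_drop below the baseline."""
    return apdex(baseline_times) - apdex(candidate_times) > max_drop


# Made-up response times in seconds.
production = [0.4, 0.7, 0.9, 1.5]  # 3 satisfied, 1 tolerating
staging = [0.5, 1.8, 2.2, 4.5]     # 1 satisfied, 2 tolerating, 1 frustrated
regressed = release_regressed(production, staging)
print(apdex(production), apdex(staging), regressed)
```

A check like this could run in a CI/CD pipeline, with a failing result prompting a closer look at traces before the release goes out.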

Tools can help you improve your Apdex score

Most companies get Apdex scores through their APM product, which generally provides far more context than raw response time data. For example, Raygun’s APM adds tracing capabilities to most applications via SDK support for .NET, Ruby, Node.js, and other languages, to visualize exactly how every request, query, or API call loads and progresses through your infrastructure.

We recommend using a combination of slow queries, slow traces, and slow API calls to determine if a problem will make a meaningful impact on your Apdex score. If a query runs for 3-5 seconds at a time, it’s probably worth investigating as one of potentially many culprits for slower response times. And Raygun works with both historical and real-time data, helping you both identify long-tail changes in performance and quickly troubleshoot a sudden drop in Apdex scores after a fresh deployment, for example.

APM products become even more important with modern development cycles, which promote heavy use of CI/CD pipelines and encourage fast-moving, rolling releases. If you’re pushing out code into the world daily, you’ll find the combination of holistic Apdex scores and nuanced tracing visualizations to be a powerful lever for improving the user experience.

The problem with Apdex scores

The simplicity of the Apdex score—how easily it’s calculated and understood by developers and non-technical employees alike—makes it tempting to name it as the performance metric that rules them all. Instead, Apdex should be used as a part of a wider monitoring stack and applied to the right contexts, not across the board as a measurement of success. Used correctly, Apdex scores are a fantastic way to get insight into platform-wide trends, but they shouldn’t be used in isolation.

Because Apdex scores are high-level and holistic, they’re not particularly useful for identifying the specific issues that cause a degraded user experience. In the signup flow scenario above, discovering that the backend inadvertently blocks additional requests while processing new users would take a great deal of troubleshooting and testing, and even dumb luck, if you relied on the Apdex score alone.

This is why it’s so important to pair more nuanced APM tooling with your Apdex monitoring to ensure that you’re seeing the big picture and can dive into the details when required.

Apdex in action

Software development isn’t getting any easier, and more business-oriented teams want access to real-time data about your customers to make informed decisions about product design, marketing initiatives, or infrastructure overhauls. You can’t expect everyone in the company to align on reducing slow queries or understand the complexities of tracing.

That’s why Apdex scores fit this unique problem so well. Yes, they’re a measure of application performance, but they’re also a valuable business-wide metric that can help you get closer to your users and your own bottom line. Your paying customers’ experience with your product determines whether or not they’ll stick around for the long haul, and Apdex scores are a powerful tool for seeing beyond your own screen. When paired with a fully fledged APM solution, they’re a transformative way of revealing areas for application improvement without constant digging.

And you can finally agree on what “performance” actually means: whether your customers already have one foot out the door, are just tolerating your application until a better one comes around, or are on the path to becoming satisfied product champions.

Raygun’s APM tool offers superhuman insight into the application stack and reveals areas for improvement without the need to go digging. If you haven’t tried it yet, it’ll transform the way you think about software development for the web. Try it for free today.