C# logging: Best practices in 2023 with examples and tools

Posted Apr 19, 2024 | 16 min. (3333 words)

Monitoring applications that you’ve deployed to production is non-negotiable if you want to be confident in your code quality. One of the best ways to monitor application behavior is by emitting, saving, and indexing log data. Logs can be sent to a variety of applications for indexing, and you can then refer to them when problems arise.

Knowing which tools to use and how to write logging code makes logging far more effective in monitoring applications and diagnosing failures. The Twelve-Factor App methodology, which advocates for the importance of logging, has gained popularity as a set of guidelines for building modern software-as-a-service. According to this methodology, log data should be treated as an event stream. Streams of data are sent as a sort of “broadcast” to listeners, without the sender taking into account what will happen to the data once it is received. According to the twelve-factor logging method:

“A twelve-factor app never concerns itself with routing or storage of its output stream.”

The purpose of this is to separate the concerns of application function from those of log data gathering and indexing. The twelve-factor method goes as far as to recommend that all log data be sent to stdout (also known as the console). This is one of several ways to keep the application distinct from its logging. Regardless of the method used, separating these concerns simplifies application code. Developers can focus on what data they want to log, without having to worry about where the log data goes or how it’s managed.
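As a minimal sketch of the event-stream idea, the helper below writes one event per line to stdout and leaves routing entirely to the environment. The `StdoutLog` class and its line format are illustrative assumptions, not part of any framework mentioned in this post.

```csharp
using System;
using System.IO;

// Hypothetical helper: emits one event per line and knows nothing about
// where the stream ends up (terminal, file redirect, or a log shipper).
public static class StdoutLog
{
    // Formats a single event as "timestamp LEVEL message".
    public static string Format(DateTime utcTimestamp, string level, string message) =>
        $"{utcTimestamp:O} {level.ToUpperInvariant()} {message}";

    // Writes the event to the given writer, or to stdout when none is supplied.
    public static void Write(string level, string message, TextWriter output = null)
    {
        (output ?? Console.Out).WriteLine(Format(DateTime.UtcNow, level, message));
    }
}
```

A call such as `StdoutLog.Write("info", "Application started")` only emits the line. Whether that line lands in a terminal, a file, or an aggregator is decided outside the application, for example by redirecting the process output.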

Popular logging frameworks for the .NET framework help developers to maintain this separation of concerns. They also provide configuration options to modify logging levels and output targets, so that logging can be modified in any environment, from Development to Production, without having to update code.

Logging frameworks

Logging frameworks typically support features including:

- Logging levels

- Logging targets

- Structured (also called “semantic”) logging

NLog

NLog is one of the most popular and best-performing logging frameworks for .NET. Setting up NLog is fairly simple. Developers can use NuGet to download the dependency, then edit the NLog.config file to set up targets. Targets are like receivers for log data. NLog can target the console, which is the twelve-factor method. Other targets include File and Mail. Wrappers modify and enhance target behaviors. AsyncWrapper, for example, improves performance by sending logs asynchronously. Target configuration can be modified by updating the NLog.config file, and does not require a code change or recompile.
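Targets and wrappers can also be wired up in code rather than in NLog.config. The sketch below assumes the NLog NuGet package (4.5 or later) is referenced. The `LoggingSetup` class, the target names, and the layout string are illustrative choices rather than recommended settings.

```csharp
using NLog;
using NLog.Config;
using NLog.Targets;
using NLog.Targets.Wrappers;

public static class LoggingSetup
{
    // Builds a configuration that sends INFO and above to the console
    // through an asynchronous wrapper.
    public static LoggingConfiguration Build()
    {
        var config = new LoggingConfiguration();

        var console = new ConsoleTarget("console")
        {
            Layout = "${longdate} ${level:uppercase=true} ${logger} ${message} ${exception:format=tostring}"
        };

        // The wrapper queues events and hands them to the console target
        // on a background thread.
        var asyncConsole = new AsyncTargetWrapper("asyncConsole", console);

        config.AddRule(LogLevel.Info, LogLevel.Fatal, asyncConsole);
        return config;
    }

    // Makes the configuration active for all loggers in the process.
    public static void Apply() => LogManager.Configuration = Build();
}
```

Configuring in code gives up the "no recompile" benefit of NLog.config, so it tends to suit tests and small tools better than production services.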

NLog supports the following logging levels:

- DEBUG: Additional information about application behavior for cases when that information is necessary to diagnose problems

- INFO: Application events for general purposes

- WARN: Application events that may be an indication of a problem

- ERROR: Typically logged in the catch block of a try/catch block; includes the exception and contextual data

- FATAL: A critical error that results in the termination of an application

- TRACE: Used to mark the entry and exit of functions, for purposes of performance profiling

Logging levels are used to filter log data. A typical production environment may be configured to log only ERROR and FATAL levels. If problems arise, logging verbosity can be increased to include lower levels such as WARN and DEBUG. The additional context provided by these logs can help diagnose failures.
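Level filtering boils down to comparing an event's level against a configured minimum. The following is a simplified model of that idea, not NLog's actual implementation. The `Level` enum and `LevelFilter` class are hypothetical names.

```csharp
using System;

// Levels ordered from most verbose to most severe.
public enum Level { Trace, Debug, Info, Warn, Error, Fatal }

public static class LevelFilter
{
    // An event passes the filter when its level is at or above the configured minimum.
    public static bool IsEnabled(Level eventLevel, Level minimumLevel) =>
        eventLevel >= minimumLevel;
}
```

With a minimum of `Level.Error`, only ERROR and FATAL events pass. Lowering the minimum to `Level.Debug` lets DEBUG, INFO, and WARN through as well, which is what "increasing verbosity" means in practice.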

Here’s an example of some code that logs using NLog:

```csharp
using System;

namespace MyNamespace
{
    public class MyClass
    {
        // NLog recommends using a static variable for the logger object
        private static readonly NLog.Logger logger = NLog.LogManager.GetCurrentClassLogger();

        // DoWork is a placeholder method name; logging calls must live inside a method
        public void DoWork()
        {
            // NLog supports several logging levels, including INFO
            logger.Info("Hello {0}", "Earth");

            try
            {
                // Do something
            }
            catch (Exception ex)
            {
                // Exceptions are typically logged at the ERROR level
                logger.Error(ex, "Something bad happened");
            }
        }
    }
}
```

Log4NET

Log4NET is a port of the popular and powerful Log4J logging framework for Java. Setup and configuration of Log4NET is similar to NLog, where a configuration file contains settings that determine how and where Log4NET sends log data. The configuration can be set to automatically reload settings if the file is changed.

Log4NET uses appenders to send log data to a variety of targets. Multiple appenders can be configured to send log data to multiple data targets. Appenders can be combined with configuration to set the verbosity, or amount of data output, by logging level. Log4NET supports the same set of logging levels as NLog, except that it does not have a built-in TRACE level.

Logging syntax in Log4NET is also similar to NLog:

```csharp
// Field declared inside the MyApp class
private static readonly ILog log = LogManager.GetLogger(typeof(MyApp));

// Inside a method of MyApp
log.Info("Starting application.");
log.Debug("DoTheThing method returned X");
```

ELMAH (Error Logging Modules and Handlers)

ELMAH is specifically designed for ASP.NET applications. It is fairly easy to set up, and includes a dashboard application that can be used to view errors. ELMAH is popular and has been available for a long time; however, it doesn’t really follow the twelve-factor method. ELMAH saves data to databases, including MySQL, SQL Server, Postgres, and others. This method mixes concerns of logging with concerns around log persistence. Log data is stored in a relational database, which is not the optimal storage method for logs (we’ll talk about a better way in a moment).

What and how to log

Separating the concerns of log management from logging simplifies application code. However, developers must still write logging code. Effective logging can make an application highly supportable. Poor logging can make it a nightmare for operations teams. It’s important for developers to know what data to log, and to use patterns for logging that can be enforced across an application.

Log levels

Generally speaking, the more you log, the better off you will be when problems arise. Logging everything is good for troubleshooting, but it can lead to disk usage issues. Large logs can be difficult to search. Logging levels are used to filter logging output, tailoring the amount of data output to the situation in hand.

Each logging level is associated with the type of data logged. DEBUG, INFO, and TRACE events are typically non-error conditions that report on the behavior of an application. Depending on how they are used, they are usually enabled in non-production environments and disabled in production. Production environments typically have ERROR and WARN levels enabled to report problems. This limits production logging to only critical, time-sensitive data that impacts application availability.

Developers have some freedom in precisely how to use each logging level provided by a given framework. Development teams should establish a consistent pattern for what is logged, and at what level. This may vary from one application to another but should be consistent within a single application.

Logging best practices

Debug logs typically report application events that are useful when diagnosing a problem. Investigations into application failures need the “W” words: Who, What, When, Where and Why:

- Who was using the system when it failed?

- Where in the code did the application fail?

- What was the system doing when it failed?

- When did the failure occur?

- Why did the application fail?

“Why did the application fail” is the result of a failure investigation, and the purpose of logging. Logging targets typically handle the “when” with timestamps added to the log entries. The rest of the “Ws” come from logging statements added to the code.

| Question asked | Purpose | Example |

|---|---|---|

| Who was using the system when it failed? | Identify the user or entity responsible for triggering the error or issue. | An e-commerce platform could log the user ID, IP address, and browser type for a failed payment transaction. Doing so would help the support team identify whether the user experienced the issue due to a technical problem or a user error. |

| Where in the code did the application fail? | Determine the location of the error in the source code. | A mobile app could log the method name and line number where a crash occurred, helping the development team isolate the root cause of the crash and fix the issue. |

| What was the system doing when it failed? | Understand the context and state of the system at the time of the error. | A game application could log the player’s location, game level, and score when a gameplay error occurs. Subsequently, developers can reproduce the error and diagnose the cause. |

| When did the failure occur? | Identify the time and duration of the error. | A server could log the timestamp and duration of a network outage. With this information, the operations team can identify patterns and trends in system failures and plan for system upgrades or maintenance. |

| Why did the application fail? | Determine the root cause of the error or issue. | A web application could log the SQL query that caused a database error, allowing developers to identify and fix the issue and prevent similar errors from happening in the future. |

There are two practices that will help make logging more effective: logging context and structured logging.

Logging context means adding the “Ws” to log entries. Without context, it can be difficult to relate application failures to logs.

This is a common log statement:

```csharp
try
{
    // Do something
}
catch (Exception ex)
{
    logger.Error(ex);
    throw;
}
```

The exception object in this example is sent as a log entry to the logging target(s). This is needed, but there’s zero context. Which method was executing (where did the application fail)? What was the application doing? Who was using the application?

Both NLog and Log4NET have features that help add this contextual information to logs, making them far more useful. The contextual data is added as metadata to the log entries.
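One way to attach context with NLog is to use named placeholders in the message template, so the "who" and "what" travel with the exception. This sketch assumes NLog 4.5 or later. The `PaymentService` class, its parameters, and the property names `UserId` and `OrderId` are hypothetical.

```csharp
using System;
using NLog;

public class PaymentService
{
    private static readonly Logger Log = LogManager.GetCurrentClassLogger();

    // chargeCard stands in for whatever operation might fail.
    public void Charge(string userId, string orderId, Action chargeCard)
    {
        try
        {
            chargeCard();
        }
        catch (Exception ex)
        {
            // The named placeholders record who was affected and what was being
            // processed; the logger name already identifies the class.
            Log.Error(ex, "Charge failed for user {UserId} on order {OrderId}", userId, orderId);
            throw;
        }
    }
}
```

Compared with logging the bare exception, this entry answers three of the "W" questions without anyone having to reproduce the failure.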

Structured logging means formatting the data that is logged in a consistent way. NLog and Log4NET both support layouts. These are classes and other utilities that format logs and serialize objects into a common format. Structured logs can be indexed much more effectively, making them easier to search. More on contextual vs. structured logging later on in this post.
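To make "a common format" concrete, here is a framework-independent sketch that serializes an event and its properties into a single JSON line. It assumes `System.Text.Json` is available (it ships with .NET Core 3.0 and later). The `StructuredLog` class and the field names are illustrative, not a layout that NLog or Log4NET produces by default.

```csharp
using System;
using System.Collections.Generic;
using System.Text.Json;

public static class StructuredLog
{
    // Serializes an event and its properties into one JSON object per line.
    public static string ToJson(DateTime utcTimestamp, string level, string message,
        IDictionary<string, object> properties)
    {
        var entry = new Dictionary<string, object>
        {
            ["timestamp"] = utcTimestamp.ToString("O"),
            ["level"] = level,
            ["message"] = message
        };

        // Each property becomes its own field, so an indexer can filter on it.
        foreach (var pair in properties)
        {
            entry[pair.Key] = pair.Value;
        }

        return JsonSerializer.Serialize(entry);
    }
}
```

Because every property is a separate field, a search such as "all ERROR events for a given userId" becomes a field lookup instead of a text match.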

Where to send log data

Earlier, we mentioned that relational databases are not the best place to send log data. Time-series databases (TSDB) are much more efficient at storing log data. TSDB require less disk space to store events that arrive in time-ordered fashion, as log events do. Open-source TSDB such as InfluxDB are much better suited to storing log data than relational databases.

The ELK stack is a popular solution for log aggregation. ELK is an acronym that stands for Elasticsearch, Logstash, and Kibana.

Elasticsearch is a fast search engine that is used to find data in large datasets.

Logstash is a data pipeline platform that will collect log data from many sources and feed it to a single persistence target.

Kibana is a web-based data visualizer and search engine that integrates with Elasticsearch.

These and other open-source and paid solutions allow developers to gather all logging to a central system. Once stored, it’s important to be able to search logs for the information needed to resolve outages and monitor application behavior. Logging can produce a lot of data, so speed is important in a search function.

The problem with logging tools

Dedicated logging tools give you a running history of events that have happened in your application. When a user reports a specific issue, this can be quite unhelpful, as you have to manually search through log files.

It’s extremely easy to get into the headspace of “let’s log everything we possibly can”, but such a mindset can be counterproductive to your end goals. Logging is efficient, but it isn’t free.

Each additional thing that you decide to log comes at a very slight performance cost. Add enough of these slight drops in performance together, and you’ve got a real problem. Ironic, right? You can also end up overwhelming yourself with information, clouding the useful information with a sea of fluff and noise.

Having focus and knowing your priorities can help you to keep your logging in check, and help you to avoid these common pain points.

What are the drawbacks of logging and log files?

Logging is helpful for developers to detect bugs and issues in their code; however, there are a few drawbacks to C# logging and log files that developers should be aware of:

- Logging can affect the application’s performance, causing performance overhead if logs are written synchronously to a file.

- Sometimes a log file may contain sensitive information such as passwords, keys, or other confidential data, creating a risk of unauthorized access. Developers should ensure that no sensitive data is logged in the log file.

- The size of the log file will continuously grow over time, making it difficult to maintain and taking up a large amount of disk space. Therefore, developers need to plan the management of log files to prevent unnecessary storage usage.

- Including logging code can sometimes make the project complex, making it harder to maintain in the future.
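The sensitive-data risk can be reduced by masking known fields before they reach a logger. The sketch below shows one possible approach. The `LogSanitizer` class and its deny-list of key names are hypothetical and would need to match the fields your own application handles.

```csharp
using System;
using System.Collections.Generic;

public static class LogSanitizer
{
    // Hypothetical deny-list of property names that must never reach a log file.
    private static readonly HashSet<string> SensitiveKeys =
        new HashSet<string>(StringComparer.OrdinalIgnoreCase) { "password", "apiKey", "token" };

    // Returns a copy of the properties with sensitive values masked.
    public static Dictionary<string, object> Redact(IDictionary<string, object> properties)
    {
        var safe = new Dictionary<string, object>();
        foreach (var pair in properties)
        {
            safe[pair.Key] = SensitiveKeys.Contains(pair.Key) ? "***" : pair.Value;
        }
        return safe;
    }
}
```

A deny-list only catches fields you have anticipated, so it works best alongside code review of new log statements.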

Contextual vs structured logging

There are two types of logging available in C#: contextual and structured logging.

Contextual logging provides information on the event context, typically including information such as timestamps, message origin, and log levels. Contextual logging helps users understand the event sequence that causes errors or issues in the system.

On the other hand, structured logging is a modern approach that provides a more structured and efficient way to log data. Using a defined schema for logging helps users to query and analyze data easily. In structured logging, each log message is associated with a set of properties that help to filter and sort log data.

Contextual and structured logging have their differences, and they also have their own benefits. Using the proper technique for logging depends on the use case and project properties. Contextual logging provides useful event context, whereas structured logging provides a more flexible and scalable way to store, query, and analyze data.

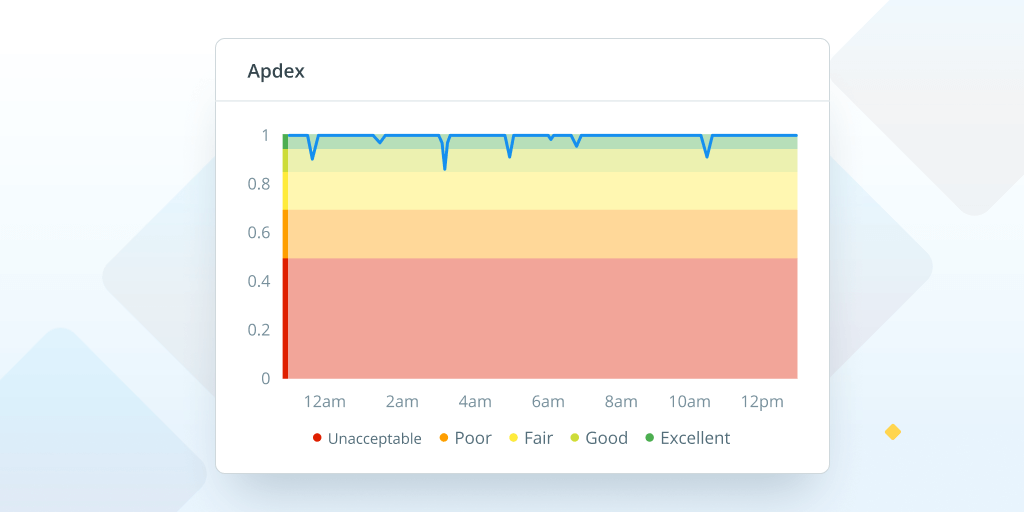

Error and log monitoring

For better error and log monitoring, developers need to keep an eye on the error rate, resolved and new errors, and the query log. The error rate is the number of errors that occur in a specific period of time, usually expressed as a percentage of the total number of events or requests processed.
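As a small worked example of the error rate definition, the helper below computes the percentage for one time window. The `ErrorMetrics` class is a hypothetical name, and treating an empty window as 0% is an assumption made for this sketch.

```csharp
public static class ErrorMetrics
{
    // Error rate as a percentage of all requests processed in the same window.
    // An empty window is reported as 0% (an assumption made for this sketch).
    public static double ErrorRatePercent(long errorCount, long totalRequests) =>
        totalRequests == 0 ? 0.0 : 100.0 * errorCount / totalRequests;
}
```

For instance, 5 errors out of 200 requests in a given minute is an error rate of 2.5%.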

To measure the error rate, developers can use error tracking and monitoring tools like NLog, Log4Net, or more sophisticated options like Crash Reporting. The error rate is a critical indicator of system health. Sometimes errors are interconnected, and solving one error may cause the occurrence of another.

Fortunately, there are many C# logging frameworks available to help developers handle this kind of situation by querying and monitoring C# error logs over time. By using modern logging techniques like structured logging, developers can filter and sort log data for better analysis of system health. This approach also helps to find the exact error in a shorter amount of time.

Crash reporting vs. error logging

Dedicated error and crash reporting tools, like Raygun, focus on the issues users face that occur when your app is in production. They record the diagnostic details surrounding the problem that happened to the user, so you can fix it quickly with minimum disruption.

Logging and crash reporting tools are different and should be used to complement each other as part of a debugging workflow.

You can pair logging with error and crash reporting tools to capture full context surrounding errors and crashes, recording relevant events leading up to the crash. This can hugely accelerate the process of understanding the series of actions or events that led to the crash, and reproducing and diagnosing the issue more effectively.

A quality crash reporting tool will also provide full diagnostics, right down to the line of code. Logs can work alongside this to provide insights into error conditions, exception messages, or specific actions performed by the user, helping identify the root causes more accurately.

Teams can also combine crash reports and logs to identify recurring issues or patterns in their application’s behavior.

On the other hand, logging is much more time-consuming without crash reporting and error monitoring for several reasons:

- Limited visibility: Without monitoring tools, you need to identify and insert log statements throughout the codebase manually, anticipating potential areas where errors may occur. This is especially slow and risky in complex applications with many code paths.

- Lack of context: Crash reports and error monitoring tools provide essential contextual information like stack traces, device information, and application state at the time of the error. If you rely on logging, you’ll have to spend additional time investigating and reproducing the error to gather relevant details manually.

- Debugging complexity: Without crash reports and error monitoring, developers may need to sift through extensive logs, searching for relevant log entries or clues to identify the cause of an error. This takes time, particularly when dealing with large log files or complex system interactions.

- Limited scalability: Manually analyzing logs for errors can become a nightmare as the application grows in complexity or user base. Without automated crash reporting and error monitoring, you’ll struggle to handle a high volume of logs, leading to delays in identifying and addressing critical issues.

Logging target types

Database

Logging to a database makes it simple to consolidate logs from multiple machines, because all C# error logs are stored in one place and can easily be searched. A diverse array of databases can be used, based on the requirements of an application.

For example, users may choose relational databases like MySQL and PostgreSQL that are easy to install and effortless to query using SQL commands. You can also use NoSQL databases such as MongoDB and Cassandra, ideal for structured logs stored in JSON format.

Finally, you have the option of selecting time-series databases as well. Time-based events are best stored in databases like InfluxDB and GridDB. This means that your logging performance will improve, and your logs will take up less storage space. It is particularly suited for intensive high-load logging.

Error monitoring tools

Error monitoring tools are among the most effective logging targets, because they not only display error messages but also save the event logs related to those messages. This is especially helpful because similar errors are grouped together, such as failures that share the same HTTP method (for example, GET API calls), so you can see what causes a problem and avoid it in your application. Raygun is a well-known example of an error monitoring tool.

Output Console

Logging to the output console is a very convenient practice because it lets you spot and fix errors easily during development. In addition, Windows provides a similar logging target called Debug Output, which you can write to using System.Diagnostics.Trace.WriteLine("Start recording"). These messages can be viewed live in a debugger’s output window while the application runs, and additional trace listeners can forward the same messages to other destinations, such as a file or a database.
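The sketch below shows how trace output can be routed to a destination of your choice using the built-in `TextWriterTraceListener`. The `TraceDemo` class is a hypothetical wrapper. The example assumes the `TRACE` compilation symbol is defined, which SDK-style projects do by default.

```csharp
using System;
using System.Diagnostics;
using System.IO;

public static class TraceDemo
{
    // Routes System.Diagnostics.Trace output to the given writer and emits one message.
    // Requires the TRACE symbol; without it the Trace calls are compiled out.
    public static void WriteStartMessage(TextWriter output)
    {
        var listener = new TextWriterTraceListener(output);
        Trace.Listeners.Add(listener);
        try
        {
            Trace.WriteLine("Start recording");
            Trace.Flush();
        }
        finally
        {
            // Remove the listener so later trace calls do not write to this writer.
            Trace.Listeners.Remove(listener);
        }
    }
}
```

Passing `Console.Out` sends the message to the console, while passing a `StreamWriter` sends the same message to a file without changing the logging call.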

Files and Database

Logs can also be written to both files and a database at the same time. This is one of the best options, because every event written to a log file is also recorded in the database. When an application reports an error in a log file, the same error is reflected in the database, where it is easier to track the event logs and identify the error quickly.

However, it is not required to log them together. Some C# logging frameworks allow them to be logged independently.

Final thoughts on logging best practices

Effective logging makes a major difference in the supportability of an application. It’s important not to mix the concerns of logging and log storage. Popular logging frameworks for .NET provide this separation of concerns. They also provide features that make it easier to log consistently and to filter log data.

Open source and paid solutions for log aggregation are important tools, especially if you have many applications logging a large volume of data. With the right tools and skills, your developers can produce applications that they, and your operations teams, can support with relative ease.

While logging is a critical part of your development toolkit, it can be time-consuming and inaccurate when you need to solve a problem fast. Logging should be used in combination with a Crash Reporting tool for far greater speed and accuracy in debugging. Take your logging practices to the next level with a 14-day free trial of Raygun Crash Reporting.