The dark art of prioritization and more: Tech leaders weigh in on software quality

Posted Aug 13, 2019 | 6 min. (1153 words)

The Tech Leaders’ Tour is a series of events bringing tech leaders together to learn from each other about improving software quality and customer experience.

Every software professional in a leadership role is concerned about the caliber of software that gets into the hands of customers. Questions like “Is the new app slow to load?”, “Is it working as it should?”, and “Why has churn increased?” are natural consequences of building software, yet we don’t always get the answers we need.

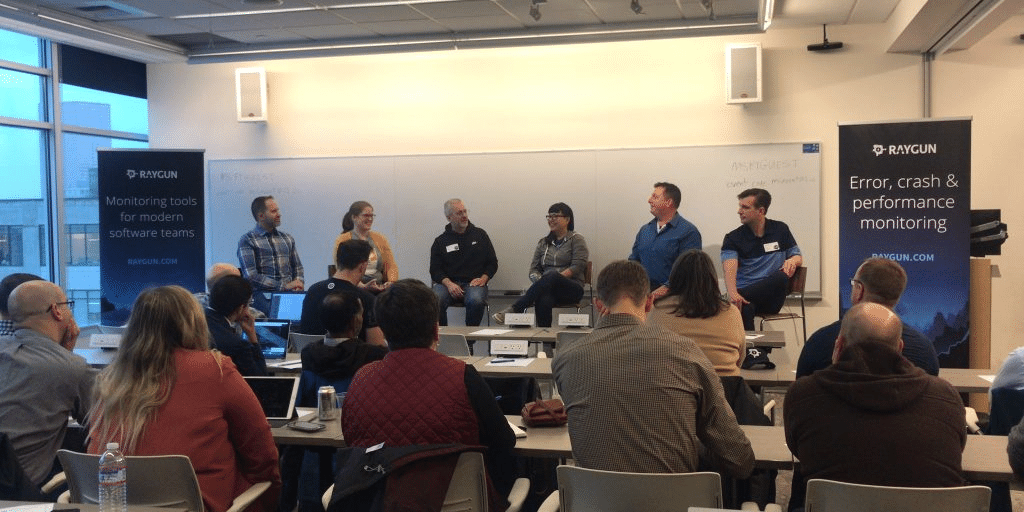

In our inaugural Wellington Tech Leaders’ Panel, we brought over 40 years of software leadership experience together from Trade Me, Xero, Raygun, and Sharesies to get an insight into how some of New Zealand’s best and brightest are delivering excellent software quality.

Our host, Investor and Director Serge Van Dam, kicked off the conversation by asking how software can mature as an industry and maintain an excellent standard of growth so we can be more impactful in the business world.

Here is how our panelists responded, in five key points.

1. Measure software quality using the number of bugs and errors

When we asked Sonya Williams, Co-founder and Chief of Product at Sharesies, what software quality means to her team, Sonya replied at 11:45 that “getting high-quality software in the hands of our customers” is the priority. “This means it works as they expect, that it solves their problem, and that it’s reliable. We look at usage, adoption, and if customers are using it like we thought they would.”

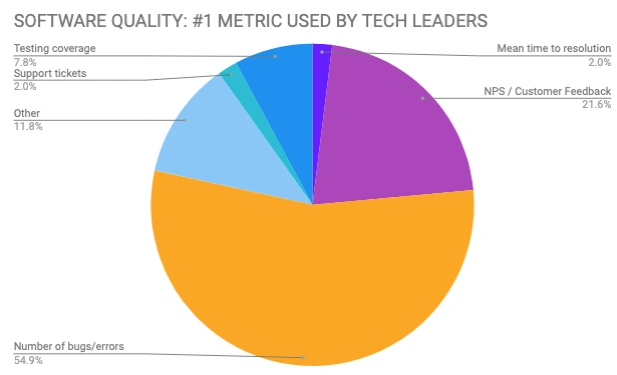

Even the best teams are still figuring out the best metric for gauging software quality, but the majority of our invite-only audience measures it by the number of bugs or errors introduced over a given timeframe.

At 15:20, we learn how Zheng Li, Director of Product at Raygun, uses software errors as a measure of software quality. “We’ve had quite a few Directors of Engineering and each person has had a different view on what to track (as a measure of software quality). In the end, we decided that the things to track are how many bugs have been introduced since a new deployment has gone in and how many have been fixed, what is the cycle time, are we getting better at what we’re doing, more efficient, and are we actually solving bugs as we go?”

Measuring software quality on bugs and errors seems to be the consensus from our audience, too.

2. Defining and communicating technical debt is a challenge, but not impossible

Technical debt is a recurring theme in our Tech Leaders’ Series. At 17:25 in the video, Simon Young, Chief Product and Technology Officer at Trade Me, used the analogy of a credit card to help communicate the inevitable tradeoff between speed and quality.

“(Tech debt) is a tool to getting something done, the same way you’d use your credit card. You want to buy the thing, you want it right now, and you’re prepared to pay it off over time. But if you don’t pay off your card after a month, you start accruing interest. If you’re managing that debt well, then you can get good leverage over it, but if you’re just, you know, taking another credit card to pay off your old credit card, you’re in a whole lot of pain and you need to focus on that.”

3. NPS is the most popular measure of customer experience

While NPS was the primary metric used by tech leaders to measure customer satisfaction, we also know it’s fraught with problems. That’s why Zheng uses NPS alongside other metrics like site speed and usage metrics.

At 46:00, Zheng says that “when our site is slow, our NPS score dives by around half. So speed is very important. We need to make sure that our Raygun app is maintained at 4 seconds to load or quicker than that. When we introduce the new project or new feature, we send our customer success teams to go and talk to the customers after we’ve sent out all the marketing material, and we gauge what people think, and if it’s not the right thing, we quickly reverse.”
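For readers who want to check the speed and NPS relationship against their own data, here is a minimal Python sketch. It uses the standard NPS calculation (percentage of promoters rating 9 or 10, minus percentage of detractors rating 0 to 6) and splits responses by whether the page met the 4-second load target Zheng mentions. The function names, the `(rating, load_seconds)` tuple shape, and the sample responses are assumptions for illustration, not Raygun's implementation.

```python
LOAD_TARGET_SECONDS = 4.0  # load-time target quoted by the panel


def net_promoter_score(ratings):
    """Standard NPS: % promoters (9-10) minus % detractors (0-6)."""
    if not ratings:
        raise ValueError("at least one rating is required")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


def nps_by_load_time(responses, target=LOAD_TARGET_SECONDS):
    """Split (rating, load_seconds) pairs at the target and score each group."""
    fast = [rating for rating, secs in responses if secs <= target]
    slow = [rating for rating, secs in responses if secs > target]
    return {
        "fast": net_promoter_score(fast) if fast else None,
        "slow": net_promoter_score(slow) if slow else None,
    }


# Hypothetical survey responses: (0-10 rating, page load time in seconds)
sample = [(10, 1.8), (9, 2.5), (8, 3.9), (6, 5.2), (9, 6.0), (3, 7.4)]
print(nps_by_load_time(sample))
```

A large gap between the two groups would suggest that load time is worth tracking next to the headline score, although a real analysis would need far more responses than this toy sample.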

Gabe Smith, GM of Enterprise Technology at Xero, reinforces that to deliver a great customer experience, it’s also important for the team to be happy and to have access to career growth. At 44:02, Gabe says, “It’s really important to get really good feedback. You know whether it’s about celebrating success or even around you know, maybe you should do that differently, or you should try and improve.”

4. Prioritize developer time between tech debt and user experience

At 23:59, Zheng shares that putting your users first should be the priority when deciding how to allocate engineering time. “For me, it’s bugs introduced per thousand lines of code, bugs remedied per development cycle, and also the number of users affected.”
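The three measures in that quote can be sketched in a few lines of Python. This is an illustrative sketch only: the `Bug` record, the function names, and the sample data are hypothetical, and the panel did not describe how any of the companies compute these figures.

```python
from dataclasses import dataclass


@dataclass
class Bug:
    title: str
    users_affected: int
    fixed: bool  # True once the bug was remedied in this cycle


def bugs_per_kloc(bugs_introduced, lines_of_code):
    """Bugs introduced per thousand lines of code."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return bugs_introduced / (lines_of_code / 1000)


def fix_rate(bugs):
    """Fraction of this cycle's bugs that were remedied."""
    if not bugs:
        return 0.0
    return sum(1 for bug in bugs if bug.fixed) / len(bugs)


def prioritize(bugs):
    """Open bugs, ordered so those affecting the most users come first."""
    open_bugs = [bug for bug in bugs if not bug.fixed]
    return sorted(open_bugs, key=lambda bug: bug.users_affected, reverse=True)


# Hypothetical bugs from one development cycle
cycle = [
    Bug("Login timeout", users_affected=1200, fixed=False),
    Bug("Typo in footer", users_affected=15, fixed=True),
    Bug("Export fails on large files", users_affected=340, fixed=False),
    Bug("Wrong currency symbol", users_affected=90, fixed=False),
]

print(bugs_per_kloc(len(cycle), lines_of_code=8000))
print(fix_rate(cycle))
print([bug.title for bug in prioritize(cycle)])
```

Sorting open bugs by users affected is one simple way to put users first. A team could just as well weight by severity or revenue impact.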

Xero also employs a similar strategy of prioritization. If a problem aligns with strategic vision, then that gets fixed first.

5. Connect engineers with the code to support quality software experience

The sentiment of bringing engineers closer to the code they write was echoed by Xero, Trade Me, Raygun, and Sharesies, but each employs different tactics. While Raygun and Sharesies both ensure engineers are on-call for support, Xero and Trade Me are more mature companies, and therefore they have support teams to manage support tickets from Intercom.

Check out the full panel

From how New Zealand’s rising stars measure software quality to keeping engineering teams happy, the Wellington Tech Leaders’ panel discussion had it all, so make sure to watch the full video, and express your interest for the next event.

Measuring success is tough but not impossible. If there is one key learning on measuring software quality, it’s that feedback tools like NPS are incomplete, and that you should be using data and direct customer feedback to build a story around the quality of your software.

To watch past editions of the Tech Leaders’ Series and get a rare glimpse at how companies like Alexa, Nike, Microsoft, AWS, Tableau Software, Raygun, The Standard, Xero, and Vend monitor software quality, catch the recordings below.

- Auckland panel - How Xero, Vend, and Pushpay prioritize user experience and performance in their product development workflow.

- Seattle panel - Amazon Alexa, Tableau, AWS, and Raygun cover customer feedback mechanisms for better quality software.

- Portland panel - Scott Hanselman leads a panel of speakers from Microsoft, Chef Software, Nike, and The Standard talking about tools, people, and processes when building world-class software.

- Auckland panel 2020 - Xero, Vend, Lancom, and Tend on prioritizing customer experience in today’s digital climate.

- Read the Tech Leaders’ Report and learn how other tech leaders measure software quality and user experience.