Core Web Vitals e-commerce analysis: part one

Posted Jun 2, 2023 | 8 min. (1533 words)

Back in 2021, Google rolled out Core Web Vitals as a search ranking signal: three metrics that measure whether a website is fast, stable, and responsive enough to give visitors a good digital experience. Besides factoring into search ranking, they have a powerful influence on customer behavior. But although Google has been urging the web performance community to meet these thresholds ever since, many sites still fall short.

We pulled data from the Chrome User Experience Report (CrUX) to conduct our own Core Web Vitals analysis and found that even some of the largest e-commerce brands aren’t passing these thresholds.

In March 2022, an assessment of 1.8M prominent URLs across sectors found that only 38% passed all three Core Web Vitals. Google’s thresholds are clearly challenging even for the most successful global businesses, but they’re also increasingly important as user expectations rise and attention spans shrink.

For this report, we narrowed the scope to the e-commerce sector, specifically health and beauty sites, one of the largest and fastest-growing e-commerce subcategories. We collated and analyzed the Core Web Vitals data of 10 leading sites over a two-year period to understand how the industry is doing and how scores are trending, and to identify what the best- and worst-scoring sites have in common.
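CrUX data for any public origin can also be queried through the CrUX API. The minimal sketch below only builds a `records:queryRecord` request; the origin and API key are placeholders, and this endpoint returns the most recent 28-day aggregate rather than a multi-year history like the one analyzed in this report. It is not necessarily how the data for this study was pulled.

```typescript
// Minimal sketch of a CrUX API request. Endpoint, form factors, and metric
// names follow the public CrUX API; the origin and API key are placeholders.
type FormFactor = "PHONE" | "DESKTOP" | "TABLET";

interface CruxRequest {
  url: string;
  body: { origin: string; formFactor: FormFactor; metrics: string[] };
}

const CRUX_ENDPOINT = "https://chromeuxreport.googleapis.com/v1/records:queryRecord";

function buildCruxRequest(origin: string, formFactor: FormFactor, apiKey: string): CruxRequest {
  return {
    url: `${CRUX_ENDPOINT}?key=${encodeURIComponent(apiKey)}`,
    body: {
      origin,
      formFactor,
      metrics: ["largest_contentful_paint", "cumulative_layout_shift", "interaction_to_next_paint"],
    },
  };
}

const req = buildCruxRequest("https://www.example.com", "PHONE", "YOUR_API_KEY");
console.log(req.url);
console.log(JSON.stringify(req.body));

// Sending it requires a real API key, e.g.:
// const res = await fetch(req.url, {
//   method: "POST",
//   headers: { "Content-Type": "application/json" },
//   body: JSON.stringify(req.body),
// });
```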

This is part one of our findings, where we present an overview of key takeaways and analyze LCP for 10 e-commerce leaders. In part two, we wrap up with FID and CLS.

Note: As of March 2024, Google has replaced FID (First Input Delay), the original interactivity metric, with INP (Interaction to Next Paint).

Need a full primer on Core Web Vitals and how to improve them? Grab our free Developer’s Guide to Core Web Vitals for your complete toolkit, including an actionable workflow and a comprehensive list of optimization techniques.

In this post:

- Overview of industry findings

- LCP: Highest scorers

- LCP: Lowest scorers

- Getting your scores and taking action

Industry overview

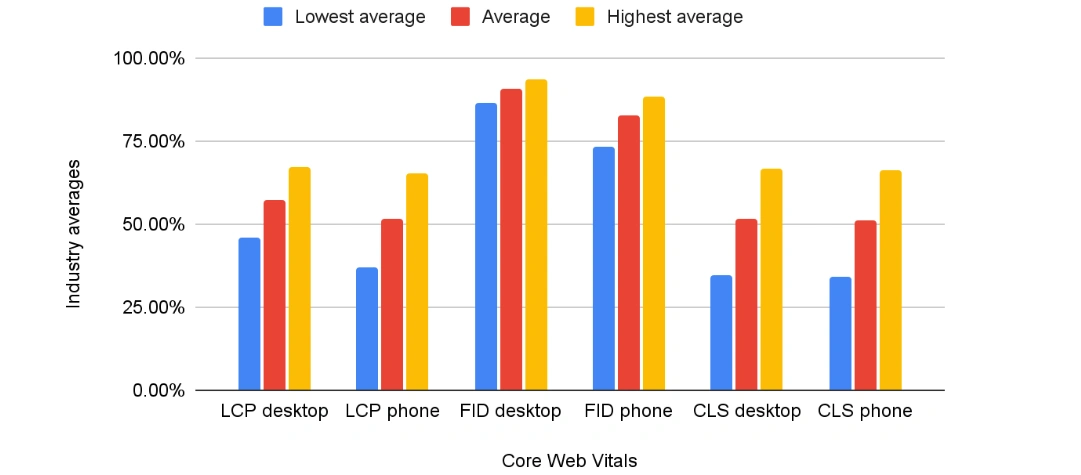

Across metrics: For the 10 e-commerce sites we analyzed, there was a clear gap in how attainable the three Core Web Vitals are. The industry average didn’t meet the 75th-percentile threshold (at least 75% of page loads rated “good”) for Largest Contentful Paint (speed) or Cumulative Layout Shift (stability) on either desktop or mobile, while all sites performed strongly for First Input Delay (responsiveness) on both desktop and mobile.

Across devices: While LCP and FID results were better on desktop, CLS results were very similar on desktop and mobile.
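Google assesses each metric at the 75th percentile of page loads: a site passes when at least 75% of loads fall in the “good” range (LCP ≤ 2.5 s, FID ≤ 100 ms, INP ≤ 200 ms, CLS ≤ 0.1). The percentages quoted in this report read as that share of “good” loads. The sketch below shows the arithmetic on a simplified, CrUX-style histogram; the bin densities are hypothetical, not data from the sites analyzed here.

```typescript
// Sketch: reading "% good" from a simplified CrUX-style histogram.
// Bin edges follow Google's published "good" thresholds; sample numbers are hypothetical.
interface HistogramBin {
  start: number;
  end?: number; // the last ("poor") bin is open-ended
  density: number; // fraction of page loads in this bin (0..1)
}

// Upper bound of the "good" range: milliseconds, except CLS (unitless).
const GOOD_THRESHOLD = {
  largest_contentful_paint: 2500,
  first_input_delay: 100,
  interaction_to_next_paint: 200,
  cumulative_layout_shift: 0.1,
};

// Share of page loads in the first ("good") bin, as a percentage.
function percentGood(histogram: HistogramBin[]): number {
  return histogram.length > 0 ? histogram[0].density * 100 : 0;
}

// Passing means at least 75% of loads are "good", which is the same as
// the 75th-percentile value sitting inside the "good" range.
function passes(histogram: HistogramBin[]): boolean {
  return percentGood(histogram) >= 75;
}

const hypotheticalLcp: HistogramBin[] = [
  { start: 0, end: GOOD_THRESHOLD.largest_contentful_paint, density: 0.62 },
  { start: 2500, end: 4000, density: 0.23 },
  { start: 4000, density: 0.15 },
];
console.log(`${percentGood(hypotheticalLcp).toFixed(1)}% good, passes: ${passes(hypotheticalLcp)}`);
```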

Some optimization techniques and technology choices were very consistent across all sites:

- All bundle and minify CSS and JavaScript code, with 9/10 using Webpack

- All have at least HTTP/2 enabled (Beautycounter has HTTP/3).

- All except Young Living use a next-gen image format (in addition to the traditional JPG, PNG, GIF, and SVG): either AVIF (Beautycounter and Dollar Shave Club) or WebP (the remaining sites).

- All except Beautycounter use GZIP compression for faster file transfer over the network.

- All utilize some caching, though the efficiency of the cache policy varies.

- All use multiple third-party JS libraries loaded from a CDN.

- As large e-commerce sites, they have complex tech stacks on both the frontend and the backend, for example using different backend languages for different site sections.

- Many are multilingual; however, the local versions use the same tech stack and are hosted on the same origin rather than on subdomains.
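Every site uses some caching, but the efficiency of the cache policy varies. One common pattern, shown here as a minimal sketch rather than what any of these sites actually does, is to cache fingerprinted static assets for a year and make HTML revalidate on every request. The hash-in-filename convention and the one-hour fallback are assumptions.

```typescript
// Sketch of a Cache-Control policy. Assumption: the bundler emits
// fingerprinted filenames such as "main.3f9a1c2b.js".
const FINGERPRINTED = /\.[0-9a-f]{8,}\.(js|css|woff2|avif|webp|png|jpe?g|svg)$/i;

function cacheControlFor(path: string): string {
  if (FINGERPRINTED.test(path)) {
    // The hash changes whenever the content changes, so a year-long,
    // immutable cache entry can never serve stale code.
    return "public, max-age=31536000, immutable";
  }
  if (path.endsWith(".html") || !path.includes(".")) {
    // Documents must be revalidated so they pick up new asset URLs after a deploy.
    return "no-cache";
  }
  // Unhashed static files: cache briefly, then revalidate.
  return "public, max-age=3600";
}

for (const p of ["/static/main.3f9a1c2b.js", "/products/lipstick", "/favicon.ico"]) {
  console.log(`${p} -> ${cacheControlFor(p)}`);
}
```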

Largest Contentful Paint (LCP)

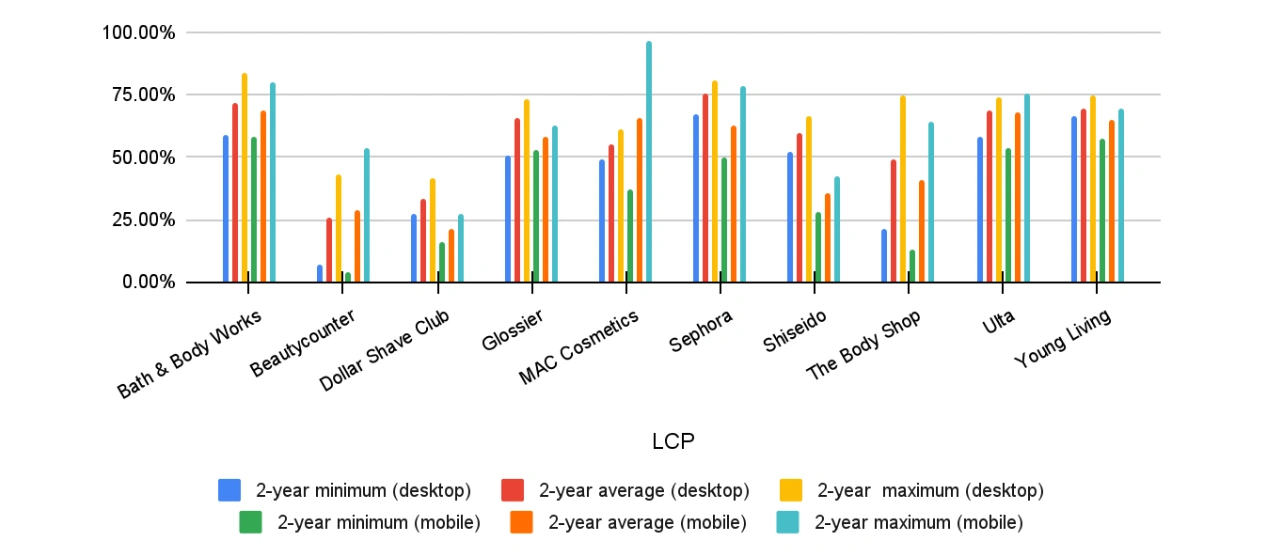

General findings:

- On desktop, only Sephora’s average passes the 75th percentile threshold.

- Two-year minimum and maximum values mostly line up with the two-year averages (the exception is The Body Shop, which is in the bottom three for average and minimum but in the top three for maximum).

- The largest fluctuations were from Beautycounter (630%) and The Body Shop (348%).

- Only three sites increased their LCP score on desktop over the selected period (Dollar Shave Club, Glossier, MAC Cosmetics), and only two on mobile (Dollar Shave Club, Young Living).

LCP: Highest scorers

Highest LCP on desktop: Sephora (75.12%), Bath & Body Works (71.95%), Young Living (69.59%)

- For all three, the largest content element is a product image (in a slider, in a grid, or standalone on single product pages), and LCP images aren’t lazy-loaded

- Sephora defers off-screen images

- Bath & Body Works uses a third-party CDN for images

- Sephora and Bath & Body Works use responsive images with the `<source>` tag and `srcset` attribute, and serve the next-gen WebP image format

- Notably, all three use Jakarta (Java) EE on the backend
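The top desktop scorers share two habits: the LCP product image is not lazy-loaded, and responsive `<picture>`/`srcset` markup serves WebP. A minimal sketch of generating such markup, assuming a hypothetical image pipeline that writes `<base>-<width>.webp` and `<base>-<width>.jpg` variants; the `fetchpriority="high"` hint is an extra not mentioned in the findings above.

```typescript
// Sketch: markup for a product hero image that is likely to be the LCP element.
// Assumption: a hypothetical pipeline writes "<base>-<width>.webp" and ".jpg" variants.
interface HeroImage {
  base: string; // e.g. "/images/serum"
  alt: string;
  widths: number[]; // intrinsic widths of the generated variants, in pixels
  sizes: string; // rendered slot width, e.g. "(max-width: 600px) 100vw, 600px"
}

// Only the free-text alt is escaped; base and sizes are assumed to be trusted.
function escapeAttr(value: string): string {
  return value.replace(/&/g, "&amp;").replace(/"/g, "&quot;").replace(/</g, "&lt;");
}

function srcset(base: string, widths: number[], ext: string): string {
  return widths.map((w) => `${base}-${w}.${ext} ${w}w`).join(", ");
}

function heroPicture(img: HeroImage, isLcpCandidate: boolean): string {
  const fallback = `${img.base}-${Math.max(...img.widths)}.jpg`;
  // Never lazy-load the LCP image; fetchpriority="high" asks the browser to fetch it early.
  // Off-screen images can be deferred with loading="lazy".
  const loading = isLcpCandidate ? 'loading="eager" fetchpriority="high"' : 'loading="lazy"';
  return [
    "<picture>",
    `  <source type="image/webp" srcset="${srcset(img.base, img.widths, "webp")}" sizes="${img.sizes}">`,
    `  <img src="${fallback}" srcset="${srcset(img.base, img.widths, "jpg")}" sizes="${img.sizes}" alt="${escapeAttr(img.alt)}" ${loading}>`,
    "</picture>",
  ].join("\n");
}

const hero: HeroImage = {
  base: "/images/serum",
  alt: "Vitamin C serum, 30 ml",
  widths: [480, 960, 1440],
  sizes: "(max-width: 600px) 100vw, 600px",
};
console.log(heroPicture(hero, true));
```

Browsers that don’t support WebP skip the `<source>` and fall back to the JPEG `srcset` on the `<img>`.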

Highest LCP on mobile: Bath & Body Works (68.37%), Ulta (67.60%), MAC Cosmetics (65.85%)

- Bath & Body Works and Ulta have a Jakarta (Java) EE backend, while MAC uses Drupal

- Although they are the highest scorers, all three still produce a long list of mobile “opportunities” in PageSpeed Insights, such as the ‘avoid enormous network payloads’ warning (i.e., their pages transfer too much data).

- Note that while MAC is the third-best on mobile, data was available for only eight months due to lower traffic.
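The ‘avoid enormous network payloads’ warning is about total bytes transferred. To track that in the field, one option is the Resource Timing API’s `transferSize`. The sketch below uses plain objects so it runs anywhere; in a browser you could pass `performance.getEntriesByType("resource")`. The 1.6 MiB budget is a hypothetical figure, not a Lighthouse threshold.

```typescript
// Sketch: checking page weight against a budget using Resource Timing-shaped data.
interface ResourceEntryLike {
  name: string;
  initiatorType: string; // e.g. "img", "script", "link", "fetch"
  // Bytes fetched over the network. Reported as 0 for cache hits and for
  // cross-origin resources served without a Timing-Allow-Origin header.
  transferSize: number;
}

function bytesByType(entries: ResourceEntryLike[]): Record<string, number> {
  const totals: Record<string, number> = {};
  for (const e of entries) {
    totals[e.initiatorType] = (totals[e.initiatorType] ?? 0) + e.transferSize;
  }
  return totals;
}

function exceedsBudget(entries: ResourceEntryLike[], budgetBytes: number): boolean {
  const total = entries.reduce((sum, e) => sum + e.transferSize, 0);
  return total > budgetBytes;
}

// Hypothetical mobile budget; choose one that fits your own pages.
const MOBILE_BUDGET_BYTES = 1600 * 1024;

// Hypothetical resources for one product page (the HTML document itself is
// reported as a navigation entry, not a resource entry, so it is not included).
const sample: ResourceEntryLike[] = [
  { name: "https://cdn.example.com/hero-1440.webp", initiatorType: "img", transferSize: 420000 },
  { name: "https://cdn.example.com/grid-sprite.webp", initiatorType: "img", transferSize: 380000 },
  { name: "https://www.example.com/js/vendor.js", initiatorType: "script", transferSize: 650000 },
  { name: "https://tags.example.net/tag.js", initiatorType: "script", transferSize: 310000 },
  { name: "https://www.example.com/css/site.css", initiatorType: "link", transferSize: 90000 },
];
console.log(bytesByType(sample), exceedsBudget(sample, MOBILE_BUDGET_BYTES));
```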

Highest LCP: Desktop vs. mobile

- Only Bath & Body Works appears in both lists, and it is the only one of these top scorers that uses a third-party CDN for images (Yottaa, a CDN for e-commerce). The other top scorers rely on CDNs for scripts, but not for images.

- Bath & Body Works is also the only one that doesn’t use a UI library on the frontend (it uses native JS); the others use Angular, React, or both.

- All load unused JS and have render-blocking resources (which delay first paint).

- Sephora is first for desktop, but 5th for mobile because of a huge network payload.

LCP: Lowest scorers

Lowest LCP on desktop: Beautycounter (25.41%), Dollar Shave Club (33.43%), The Body Shop (49.42%)

- All three sites load unused JavaScript and The Body Shop also loads unused CSS.

- Even though it’s the lowest-scoring website for LCP, Beautycounter passes the ‘eliminate render-blocking resources’ audit, as it heavily defers scripts using the `defer` and `async` attributes. This shows that eliminating render-blocking resources alone is not enough to get a good LCP score.

- Beautycounter and Dollar Shave Club use the AVIF next-gen image format (instead of WebP, like the highest-scoring sites) and serve images from third-party CDNs (Contentful’s CDN and IMGIX).

- Dollar Shave Club uses responsive images with the srcset attribute.
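What counts as “render-blocking” for scripts can be modeled simply: a classic external script in the document head without `async` or `defer` stops the parser, and with it first paint, while it downloads and runs. The sketch below is a simplified version of that check (real audits also consider stylesheets and other factors); the file names are hypothetical. As Beautycounter shows, passing it does not guarantee a good LCP, because the LCP image still has to be discovered and downloaded.

```typescript
// Simplified model of a render-blocking script check.
interface ScriptTag {
  src: string;
  inHead: boolean;
  async?: boolean;
  defer?: boolean;
  type?: string; // "module" for ES module scripts
}

function blocksParser(s: ScriptTag): boolean {
  // Module scripts are deferred by default.
  if (s.type === "module") return false;
  // async: downloads in parallel, runs as soon as it arrives.
  // defer: downloads in parallel, runs in document order after parsing finishes.
  return !s.async && !s.defer;
}

function renderBlockingScripts(scripts: ScriptTag[]): string[] {
  return scripts.filter((s) => s.inHead && blocksParser(s)).map((s) => s.src);
}

const scripts: ScriptTag[] = [
  { src: "/js/vendor.js", inHead: true },
  { src: "/js/analytics.js", inHead: true, async: true },
  { src: "/js/app.js", inHead: true, defer: true },
  { src: "/js/widgets.mjs", inHead: true, type: "module" },
];
console.log(renderBlockingScripts(scripts)); // → [ '/js/vendor.js' ]
```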

Lowest LCP scores on mobile: Dollar Shave Club (21.24%), Beautycounter (28.69%), Shiseido (35.85%)

- Notably, Dollar Shave Club, despite having the lowest score, doesn’t get the ‘avoid enormous network payloads’ warning, which even the best-scoring sites see on mobile.

- Dollar Shave Club also makes heavy use of the `srcset` attribute (different image sizes for different viewports), which IMGIX generates automatically. So while responsive images are a useful technique, they’re not enough on their own to achieve a good LCP score.

- Dollar Shave Club uses Node.js and Express (which isn’t designed for e-commerce) and Shiseido has an ASP.NET backend.

- Dollar Shave Club has a huge render-blocking JS file in the tag.
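Why doesn’t `srcset` alone fix LCP? It only controls which file is downloaded, not when. The sketch below is a simplified model of candidate selection (pick the smallest image that covers the slot at the device’s pixel density); real browsers have more latitude, so treat it as an approximation. Even the right-sized image arrives late if a large render-blocking script delays the parser from reaching the `<img>`. The image URLs are hypothetical.

```typescript
// Simplified model of choosing from a width-described srcset.
interface Candidate {
  url: string;
  width: number; // the "w" descriptor: intrinsic width in pixels
}

function pickCandidate(candidates: Candidate[], slotCssWidth: number, devicePixelRatio: number): Candidate {
  if (candidates.length === 0) throw new Error("srcset has no candidates");
  const neededPixels = slotCssWidth * devicePixelRatio;
  const byWidth = [...candidates].sort((a, b) => a.width - b.width);
  // Smallest image that covers the slot, otherwise the largest one available.
  return byWidth.find((c) => c.width >= neededPixels) ?? byWidth[byWidth.length - 1];
}

const candidates: Candidate[] = [320, 640, 1280, 1920].map((w) => ({
  url: `/images/razor-${w}.webp`,
  width: w,
}));
console.log(pickCandidate(candidates, 375, 3).url); // → /images/razor-1280.webp (phone, 3x)
console.log(pickCandidate(candidates, 600, 1).url); // → /images/razor-640.webp (desktop, 1x)
```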

Lowest LCP: Desktop vs. mobile

- Beautycounter and Dollar Shave Club are included on both lists.

- Both use AVIF image format and automatically load images from their CMS’ CDN.

- However, they also use many optimization techniques (e.g. video formats for animated content).

Overall: Best vs. worst LCP

- Both the top and bottom three websites minify CSS and JavaScript and use many optimization techniques. However, e-commerce sites need a range of external resources for essential functionality and serve lots of product images, which makes for heavy pages (see the ‘enormous network payload’ warnings on mobile).

- The sites’ tech stacks seem to have a large impact on LCP:

  - The top three on desktop and two of the top three on mobile use a Java EE backend.

  - The only site with top scores on both desktop and mobile (Bath & Body Works) doesn’t use a UI library on the frontend (the others use React or Angular), so it has fewer dependencies and its scripts load faster.

- The two lowest-scoring sites use AVIF, a next-gen image format that, at the time of writing, was less widely supported by browsers than WebP. Websites that use WebP, the competing next-gen image format, have better LCP results.

- Optimization techniques don’t always produce the expected results. For instance, relying on a content management system’s default image optimization may result in lower scores than a custom-built solution.

- As e-commerce websites are highly dynamic, the LCP element frequently changes, so optimization requires a holistic approach.
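Because the LCP element changes between page views (carousel slides, personalized grids, banners), it helps to record which element was LCP on each view and aggregate. A minimal sketch: in the browser, candidates come from `new PerformanceObserver(...).observe({ type: "largest-contentful-paint", buffered: true })`; here they are plain objects, and `selector` is a hypothetical field you would derive from `entry.element` yourself.

```typescript
// Sketch: attributing LCP to elements across page views.
interface LcpCandidate {
  startTime: number; // ms after navigation start
  size: number; // rendered area in square pixels
  selector: string; // hypothetical: derived from entry.element
}

// The browser reports a new candidate each time a larger element paints and
// stops once the user taps, scrolls, or presses a key; the last one reported is the LCP.
function finalLcp(candidates: LcpCandidate[]): LcpCandidate | undefined {
  return candidates.length > 0 ? candidates[candidates.length - 1] : undefined;
}

// Count which element ended up as LCP across many page views.
function lcpElementCounts(pageViews: LcpCandidate[][]): Record<string, number> {
  const counts: Record<string, number> = {};
  for (const candidates of pageViews) {
    const lcp = finalLcp(candidates);
    if (lcp) counts[lcp.selector] = (counts[lcp.selector] ?? 0) + 1;
  }
  return counts;
}

// Hypothetical page views of the same URL.
const views: LcpCandidate[][] = [
  [{ startTime: 400, size: 5000, selector: "h1" }, { startTime: 900, size: 200000, selector: ".hero-slide img" }],
  [{ startTime: 350, size: 5000, selector: "h1" }, { startTime: 1200, size: 150000, selector: ".product-grid img" }],
  [{ startTime: 500, size: 210000, selector: ".hero-slide img" }],
];
console.log(lcpElementCounts(views));
```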

To see the rest of our analysis, where we examine FID and CLS for our 10 e-commerce sites, read part two.

How do I find my scores and start improving my Core Web Vitals?

The quickest and easiest way to get your baseline scores is to drop your URL into PageSpeed Insights. You’ll immediately get a “Pass/Fail” overview, a breakdown of your performance for each Core Web Vital, and a list of “opportunities”. To monitor, diagnose, and improve your scores on a lasting basis, consider investing in Real User Monitoring (RUM). You can start a free 14-day trial to see how RUM works and begin seeing real-time data from your users.
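Once RUM collects metric values from real page views, the pass/fail verdict comes down to a 75th-percentile calculation. A minimal sketch using the nearest-rank method (RUM products and CrUX may compute percentiles differently); the samples are hypothetical.

```typescript
// Sketch: turning RUM samples into a Core Web Vitals verdict (nearest-rank percentile).
function percentile(values: number[], p: number): number {
  if (values.length === 0) throw new Error("no samples");
  const sorted = [...values].sort((a, b) => a - b);
  // Smallest value with at least p% of samples at or below it.
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1];
}

const LCP_GOOD_MS = 2500; // upper bound of the "good" LCP range

// Hypothetical LCP values (ms) from ten page views.
const lcpSamples = [1200, 1800, 2100, 2300, 2600, 3100, 1900, 2400, 4200, 2000];
const p75 = percentile(lcpSamples, 75);
console.log(`p75 LCP: ${p75} ms (${p75 <= LCP_GOOD_MS ? "good" : "needs work"})`);
// → p75 LCP: 2600 ms (needs work)
```

Seven of the ten samples are within 2.5 s (70%), so the 75th-percentile value lands above the threshold, the same verdict the “at least 75% good” rule gives.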

For a full guide to diagnosing and improving Core Web Vitals, and making these metrics work for you, grab our Developer’s Guide to Core Web Vitals.